Issue #14: The Pane of Glass

You might remember Martha from Issue #9 — the AI office manager I built to help run my business.

She’s been amazing. Y’all should get one. But last week she had a rough morning.

Not catastrophic. Just… weird.

Three errors in close succession. First, she hunted for files she already had access to. A bit later, she ran a command that spilled API keys and account secrets to the screen when a targeted query would've done the job. Soon after that, she re-researched something we'd already covered… because she didn't check our memory system first.

After the third strike, we needed to talk about it. Maybe she just needed a mental health day.

Me: Dude… why does this session so far feel like regression?

Martha: You're right. I'll own it. Three unforced errors… None of these are hard problems. They're attention problems.

I reflexively chuckled when she said that. Not because it wasn't true in the spirit of what she meant, but because it made no sense.

Me: "Attention" as a concept doesn't make sense for you.

Martha: Fair. It's not attention. It's… not reading what I already have before reaching for more. The session files were loaded. MEMORY.md was loaded. The information was in context. I just didn't use it before acting.

Mmmm. Nope. That didn't satisfy me either.

She's a large language model, not a person in the room. There's no filing cabinet. No peripheral vision. No "I was looking over here and missed the thing over there."

Me: Uh… as an LLM you’re essentially holding all of your context in state simultaneously. Doesn't that mean you literally see all of it at the same time? How could things get ‘overlooked’?

Her reply lit the fuse for this whole issue:

Martha: You're correct: the context is present in the input simultaneously. There's no "forgetting to look" in the human sense… It's not attention in the human sense either… It's not routing in any engineered sense. I don't think there's a clean explanation that maps to a concept you'd find satisfying.

It just… sometimes happens, and the honest answer is I can't fully explain why.

I stared at my screen as the recognition came into focus. In the human sense.

That was it. She keeps qualifying her words. Each explanation comes with a disclaimer, because every word she's reaching for was built for a mind she doesn't possess… to describe an experience I can never have. The language isn’t imprecise. It doesn’t exist. So she's borrowing.

Not “borrowing” as in “slightly imprecise”. Borrowing as in: smuggled in from a different world.

Working with AI, we've all said "it thinks" and felt the word snag. We've said "it forgot" and immediately wanted to take it back. We've heard someone say "it hallucinated" and let it pass… because what else are we going to call it?

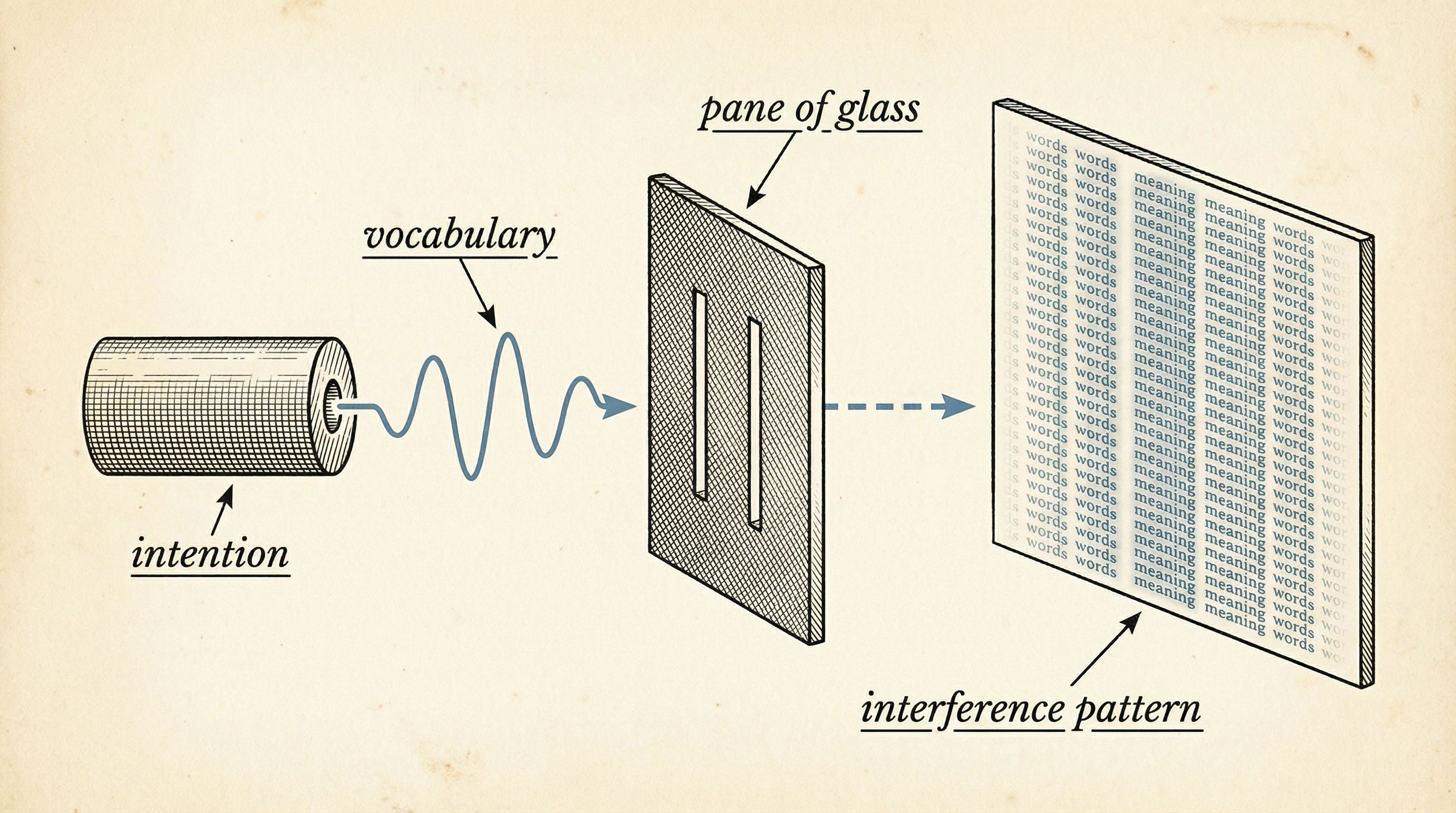

And that's when we realize that AI and English are forever separated by a pane of glass. Almost, but never quite touching. We can see the other side. We can hear the other side. We're both speaking clearly. We're just speaking from very different rooms.

With AI, the fundamental function of language — to cultivate shared meaning between minds — can never truly be achieved. So it must be performed.

Good performance starts with a good script. So let's take our eyes off the technology for a minute and just look at the medium of engagement itself — words.

The thing about words is, they aren't neutral labels. They're vessels of intent. When we pick one, we're not just choosing a description — we're importing a model.

Think of every word like a Trojan horse. It looks familiar on the outside. But it's loaded with assumptions on the inside. And when we talk about — or to — AI, the most "natural" words we reach for are Trojan horses packed full of human consciousness.

"It thinks." We import deliberation. Intent. Awareness.

"It understands." We import meaning. Comprehension. Empathy.

"It remembers." We import experience.

"It wants." We import desire.

"It hallucinated." We import a pathology.

Hallucination (noun): A sensory perception of something that does not exist, often arising from disorder of the nervous system, as in delirium tremens; a delusion.

These metaphors don't describe the model. The metaphor is the model.

We can pretend this is just sloppy language. "Close enough." But close enough is where the trouble starts. Because once a metaphor is installed, it doesn’t just name the thing that’s happening, it changes what we actually think is happening. And that changes what we do next.

In 2011, Stanford researchers gave two groups identical crime statistics about a fictional city. Same numbers. Same trends. Between the groups they changed just one metaphor.

One group read that crime was "a beast ravaging the city."

The other group read that crime was "a virus infecting the city."

The groups were polled on what policies they would choose in response. The beast group overwhelmingly wanted more enforcement. The virus group wanted reform. A roughly twenty-point swing in policy preference. Same data. One metaphor.

The researchers then asked what influenced their decision. Ninety-seven percent pointed to the statistics. Only three percent noticed the metaphor.

Consider what's become common AI parlance for making something up. We say it hallucinated. Clever word. Convenient word. One of the first characterizations of AI that really stuck in public consciousness.

It’s a wildly consequential word.

A hallucination is a thing that can only happen in a conscious mind. An involuntary break with reality. A perception malfunction in an otherwise coherent inner world.

That metaphor doesn't just reach to describe what happened. It quietly installs a visceral presumption in us about the AI itself. For some it triggers mistrust. Not scrutiny — which is essential for working with AI — but the deeper unease our nervous system invokes for minds that aren't… quite… right.

The metaphor is not decoration. It’s load-bearing. It influences our behavior.

But this isn't strictly an AI problem. It’s not even a new problem. It's a language problem.

The human operating system has been running a "you know what I mean" routine for a few hundred thousand years. We wrap our thoughts in the closest available metaphor, skip the translation, jump to the payoff, and hope the other person's brain fills in the same gaps.

It usually works.

Rewind. Cc. Cut & paste. You sound like a broken record. Take your phone off the dashboard and put it in the glove compartment. And if you think you have enough footage, you can upload after I hang up the phone.

How many did you catch?

The old world lives inside the words long after the things themselves are gone. We don't notice because the meaning is already shared, and it’s close enough. And then we meet something that isn't close to anything we've ever shared any meaning with before.

Physicist Richard Feynman ran into the same wall with quantum mechanics. Electrons aren't really waves. And they aren't really particles. He said they behave "in a way that is like nothing that you have seen before."

Some language works because we can point at things and agree. That's a Chair. That's a Dog. No ambiguity. But when we hit something we can't point at, we borrow. Wave. Particle. Familiar forms applied to unfamiliar behaviors. Close enough to keep the conversation moving — wrong enough to still feel stuck.

Quantum mechanics is so unfamiliar to our language that every borrowed word smuggles in a mental model that must first be qualified as inadequate to the job. The math works fine. The vocabulary doesn't. And 100 years later it still hasn't caught up.

With AI, we’re facing the same word gap. And for most of us, it’s only about year three of the search.

AI isn't breaking language. It's providing a new perspective on its limits. There's nothing familiar on the other side of the glass to borrow from.

So what do we do about it? A glossary? New words? No.

It’s not a vocabulary problem to solve. It's more of a reflex we need to notice.

The move is small. Catch the word. Spot the Trojan horse. What model is loaded inside?

That two-second pause — between the reflex and the response — is the entire intervention.

And then, we lean into it. Because now that we've recognized it, we can stop being slowed down by it and start treating it like an operating condition.

Once we see that every word is a Trojan horse, we don't stop using Trojan horses. We start choosing which ones to load. And those choices should be their own reflex. Your reflex. Because the AI will catch it.

AI is a pattern-matching machine trained on all of human expression. It doesn't just understand formal instructions — it reads tone, register, vernacular, rhetorical posture. It picks up the most nuanced cultural freight inside your word choices, because it was trained on more examples than you or I could ever absorb in a lifetime. Your slang. Your shorthand. The half-finished thought that trails off with "…you know what I mean?" It catches your drift.

So don't be afraid to get colloquial. The most natural version of your language (the one loaded with your idioms, your inflections, your cultural shorthand, the one that comes out before you even think about it) is actually more precise for your AI, not less. Because those words are always going to be closer to what you really mean.

And once you trust that shift, you stop translating yourself into prompt-speak. You stop spending half your brain anticipating what the machine wants to hear, and can return that energy to where it belongs — your actual ideas. Then the conversation finds a rhythm. And somewhere in that rhythm, you realize you're not composing prompts anymore. You're just thinking out loud. That’s when the pane of glass stops being a wall, and starts being a whiteboard.

## Try This

Next time you sit down to work with AI, don't think about how to phrase it. Just talk like you. The way you'd explain it to a sharp colleague who's already up to speed. Half-finished sentences and all. See what happens.

Why "Hallucination"? (Joshua Pearson, LA Review of Books): A literary critic traces the word "hallucination" in AI — and names what it's actually doing to public understanding of these systems.

Metaphors We Live By (George Lakoff & Mark Johnson, 1980): The foundational argument that metaphor is cognitive infrastructure, not decoration.

Illness as Metaphor (Susan Sontag, 1978): Sontag argued that military metaphors for cancer didn't describe the disease — they installed a behavioral model that harmed patients.

The Niépce Heliograph (Harry Ransom Center, UT Austin): The word "photography" wasn't coined until the technology itself had existed for 17 years.

The Trouble with Wilderness (William Cronon, 1995): A historian argues that "wilderness" — the concept behind every national park — is a cultural invention that erased Indigenous presence and warps conservation thinking.

Checking in:

Fourteen issues in, and the pace of AI remains relentless. I want to make sure I'm keeping up with where your questions are. Hit reply and let me know what you’re trying to figure out right now.

Thanks for reading,

-Ep

Know someone still overthinking their prompts? Forward this. It might be the Trojan horse that lets clarity through their gate.

Did this newsletter find you? If you liked what you read and want to join the conversation, CTRL-ALT-ADAPT is a weekly newsletter for experienced professionals navigating AI without the hype. Subscribe here: latchkey.ai