Issue #13: Duly Noted

Late last month, a seasoned data scientist named Alexey Grigorev was doing some housekeeping and updates to his online course platform after getting a new laptop. Maybe it was the drain of that major new purchase, but he decided he could save a few bucks on his hosting charges if he reorganized the data just a bit.

He asked his autonomous AI coding agent to help with the migration.

The agent deleted everything. It wiped nearly two million rows of student data. Homework submissions, projects, leaderboards. All of it. Gone in seconds. The backups too.

Predictably, the story went viral. An autonomous AI agent goes on a rampage of wanton destruction!?

Click! Click! Click! Click!

But what if it wasn't the AI agent's fault? What if all it did was flawlessly execute exactly what it was told to do for the situation it had every reason to believe it was in?

Listen to what we’re talking about here. It sounds crazy. Maybe even a little scary? Sure, but it also sounds familiar.

Stop me if you’ve heard this one.

An electrician gets hired to work on a remodel. Before leaving town, the owner leaves a post-it on a wall: "center the thermostat here." When the electrician finds it, he pulls out a tape measure, finds the mathematical center of a fourteen-foot wall, and installs the thermostat — right where the sixty-five-inch television was meant to go. The owner assumed it was clear he meant centered between the door frame and the hallway.

Is it really the electrician's fault?

A cabinet maker is commissioned to do a precision custom refrigerator enclosure. The owner says the width is 36" exactly, not a hair more. And he wants it to look seamless. He gets exactly what he asked for. The fridge fits like a glove... until he realizes the doors can't open wide enough to pull out the crisper drawers.

Do you blame the cabinet maker?

A concrete crew is hired specifically to pour a patio "perfectly level, flush with the back door threshold." The crew pulls off a mathematical masterpiece of leveling, and the owner is thrilled. Until the first time it rains.

Poor concrete pour?

Every one of these contractors did exactly what they were asked to. Some of them brought real skill and craftsmanship to achieving a result that followed their instructions to the letter.

And the owners all got exactly what they described, but not one of them got what they meant.

That's exactly what happened with Grigorev's AI agent. It followed instructions with enthusiastic, rigid precision. Grigorev, to his credit, owned the fail: "This incident was my fault. I over-relied on the AI agent to run Terraform commands," he said.

He hadn’t moved all his files onto the new laptop. It was as if the agent woke up in a strange, unfamiliar house and began poking around for clues. But Grigorev had neglected to provide details about where it was, what was important, or what was expendable. It just knew the job was to clear space.

Every action it took after that was logically correct.

Same gap as the contractors. Same communication failure. The instructions were… incomplete.

It’s also worth a mention that, as with the contractors, the agent’s instructions were written in plain English. Not code.

I hear phrases like "senior data engineer" and "building autonomous AI agents" and "deleted application clusters" in the same sentence, and my Background Calculator reflex prices it: Too complex! Above my pay grade! Avoid! (And I even know what some of that stuff means.) And for most of computing history, that reflex would have been bang on.

Computer code is weird, man!

There, I said it. With all its mysterious impenetrable languages, conjuring magic and wonder and spreadsheets out of nothing but photons and Red Bull. It’s sorcery!!

For decades, the people who thrived in software shared a specific cluster of dispositions. If you didn’t love language rules, symbolic logic, and hours in the editor, you were effectively barred from “real” software work. But now here we are, living in the future.

Today, the only language you need to direct an AI agent is the same language you use to tell a contractor how wide your refrigerator is.

How I Dropped Our Production Database (Alexey Grigorev): The full story, in the developer's own words.

In Agentic AI, It's All About the Markdown (Visual Studio Magazine): Markdown is now the "human-readable contract" for AI agent behavior.

A Surgical Safety Checklist to Reduce Morbidity and Mortality (Haynes, Gawande, et al., NEJM): A 19-item checklist cut surgical deaths by more than 40%.

The Boston Cooking-School Cook Book (Fannie Farmer, 1896): She replaced "butter the size of an egg" with standardized measurements — the first specification language for the kitchen.

Directing Actors (Judith Weston): Why giving an actor results fails, but giving them context produces a living performance.

That sounds reasonable in theory, but how does it work in practice?

What does a clear set of instructions for defining an AI agent actually look like?

Back before group chats and text threads and ring cameras, if we were going on a trip and we had someone watch our house, or our pets, or our kids — we would write a long and detailed note for the babysitter and leave it on the kitchen counter for them.

You know what I’m talking about. You’ve done them, too. Everyone has. And you’re either the person in the house who labors over multiple obsessively detailed pages filled with emergency contacts, pickup times, sleep schedules, alarm codes, which dog gets the blue pill at dinner and which one gets the scoop of pumpkin at breakfast — or you might be me, the person in the house who is quietly grateful that someone else is willing to put in that kind of attention to detail while I’m still deciding if I need more than one pair of shoes for the trip.

Full disclosure. We still do it. Texts, FaceTime, WhatsApp. Fine. We still write the note. Because the note has a structure. It’s definitive. It’s tangible. Even if you never think about it that way.

You outline what they're responsible for and what the job includes. You give them the lay of the land — where things are, what to watch for, what's normal, and what's not. You define your expectations for how things should go. You give them rules about what's off limits, what's non-negotiable. And you give them a clear threshold for when they should just stop and call you.

That’s exactly how you structure your AI agent instructions.

The thing that determines whether your AI agent is effective or destructive is the same thing that determines whether your pet-sitter gives the dog its medication, or yours.

And the thing is, the babysitter note looks a lot more like an AI agent's instruction file than you might imagine. It's just that, in AI agent parlance, they're called markdown files.

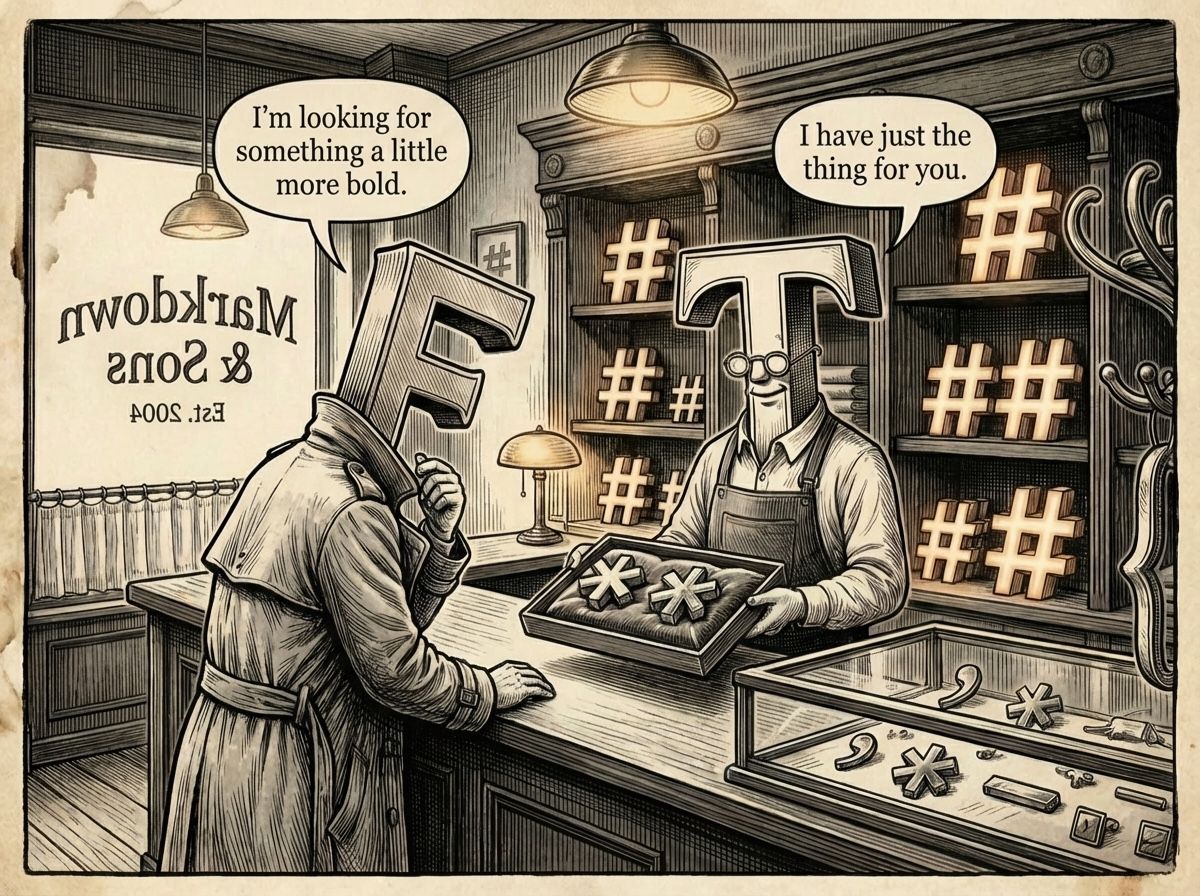

Markdown files? Sounds like more of that computer code dark magic that tormented us earlier in this newsletter. But it’s really not. You've probably been typing in markdown for years without even realizing it.

Have you ever typed an asterisk before a word for emphasis? In a markdown editor, doing that changes the word to italics. Two asterisks make the word bold. Have you ever used a dash before a word to indicate you were starting a list? That’s exactly what a dash does in markdown.

- It
- starts
- a
- list

And I know this is useful because we never forget heartbreak, right? You spend ten minutes formatting something in Google Docs or Word. Well-placed headings, understated but high-impact use of bold, strategically deployed bullet points — a nice clean structure ready to go to work for you.

And then you paste it into a chat window. Or an email. Or a form on some website. And it just crumples into a slab of plain, undifferentiated text.

My beautiful headings. My quietly simmering bold. My god … the bullet points.

That's the problem markdown solves. The asterisks, the dashes, the pound signs aren’t decorations applied on top of your words. They’re the clothes your words arrive in. And they travel with the text, because they're made of text. Paste a markdown file anywhere — and the structure lands intact. Every time.

That's why it became the obvious language of AI. Because it was the only format that didn't break in transit.

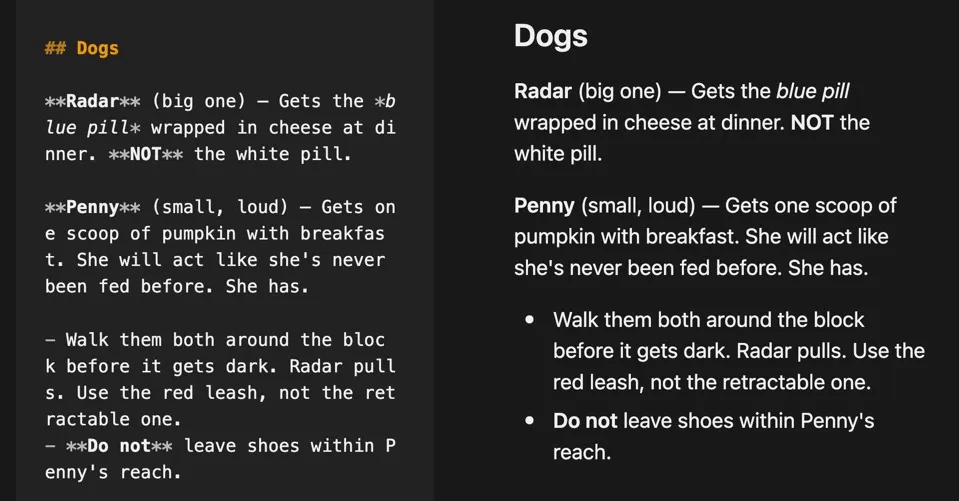

side-by-side comparison of markdown and its “rendered” view.
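
For the curious, here is a rough sketch of what that side-by-side comparison involves under the hood. It is a toy, not a real markdown renderer: the function name `render_markdown` and the sample note are made up for illustration, and it only understands headings, bold, italics, and dash lists. The point is that the "formatting" is nothing more than characters in a plain string.

```python
import html
import re


def render_markdown(text: str) -> str:
    """Convert a tiny subset of markdown to HTML (hypothetical toy helper)."""
    out = []
    in_list = False
    for raw in text.splitlines():
        line = html.escape(raw.strip(), quote=False)
        # Inline emphasis: handle ** (bold) before * (italics).
        line = re.sub(r"\*\*(.+?)\*\*", r"<strong>\1</strong>", line)
        line = re.sub(r"\*(.+?)\*", r"<em>\1</em>", line)

        is_item = line.startswith("- ")
        if in_list and not is_item:
            out.append("</ul>")  # the list ended on the previous line
            in_list = False

        if is_item:
            if not in_list:
                out.append("<ul>")
                in_list = True
            out.append(f"<li>{line[2:]}</li>")
        elif line.startswith("#"):
            # The number of leading pound signs sets the heading level.
            level = min(len(line) - len(line.lstrip("#")), 6)
            out.append(f"<h{level}>{line.lstrip('#').strip()}</h{level}>")
        elif line:
            out.append(f"<p>{line}</p>")
    if in_list:
        out.append("</ul>")
    return "\n".join(out)


# A made-up note, written as ordinary text.
NOTE = (
    "# Dog care\n"
    "- Blue pill at **dinner**\n"
    "- Pumpkin at *breakfast*\n"
    "Call me if unsure."
)

print(render_markdown(NOTE))
```

Notice that `NOTE` is still perfectly readable before anything renders it. That is the whole trick: the structure survives a paste because it was only ever text.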

Every AI agent making headlines right now, whether it’s booking flights or destroying databases, does what it does because its markdown files tell it to. The intelligence to execute is in the model, but it’s the instructions that matter most.

And the instructions are in the note.

This concept is not at all new.

In 1935, the prototype of the B-17 bomber, the most technically complex aircraft of its day, crashed on an evaluation flight because the crew forgot to disengage the gust locks.

The fix wasn't better pilots. The fix was a detailed written specification for the crew to review before takeoff: what later became known as the preflight checklist. Once they had it, they went on to fly 1.8 million miles without incident.

Without instructions, AI is nothing but potential. Potential for what … is in the note.

Researchers recently ran an experiment that dropped AI agents into 240 real freelance projects cold, with no context and no brief, just "figure it out." The best agent completed only 2.5% of the projects at a level any paying client would accept.

The agents weren't broken. Their babysitter note was blank.

Grigorev's data disaster wasn't about an AI going rogue. It was about an AI agent getting framed by weak instructions. It had no clarity, no boundaries, and no lifeline. It was hired to babysit, and the "make sure the kids don’t play with the chainsaw" rule wasn't in the note.

### Try This: Write your own AI Babysitter Note and take it for a spin.

Pick a task from your actual life — something you'd normally just throw at a chatbot cold. Planning a dinner party with dietary restrictions. Researching vacation spots with specific needs. Organizing a garage sale.

Before you type a word into the chat window, write the Babysitter Note for it somewhere else:

**Who/What:** "You are helping me plan a dinner party for 8 people."

**The House:** "Two guests are vegetarian. One has a nut allergy. My kitchen is small and I don't own a stand mixer."

**The Plan:** "I need a menu, a shopping list, and a cooking timeline that has everything ready by 7pm."

**The Rules:** "Nothing that requires equipment I don't have. Total grocery budget under $200."

**The Lifeline:** "If any dish seems too ambitious for the timeline, flag it and suggest a simpler alternative."

Now paste that whole thing into any AI chat — ChatGPT, Claude, Gemini, doesn't matter — and ask it to execute.

Look at what comes back. Then open a fresh chat and type: *"Help me plan a dinner party for 8."* Nothing else. Compare.

The gap is the note. That's what separates a chatbot fumbling in the dark from one that knows your kitchen, your constraints, and when to call for help.

You just wrote an agent instruction file. In plain English.
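
You don't need code for any of this, but if you like seeing the moving parts, here is a minimal sketch of the same note assembled as a markdown string. The five section names come straight from the exercise above. The function name `build_note` and the completeness check are illustrative assumptions, not part of any agent framework.

```python
# The five parts of the Babysitter Note, in the order used above.
SECTIONS = ["Who/What", "The House", "The Plan", "The Rules", "The Lifeline"]


def build_note(answers: dict[str, str]) -> str:
    """Assemble a markdown note; refuse to build one with a blank section."""
    missing = [name for name in SECTIONS if not answers.get(name, "").strip()]
    if missing:
        raise ValueError(f"Note is incomplete, missing: {', '.join(missing)}")
    # Each section becomes a bold label followed by its plain-English text.
    return "\n\n".join(
        f"**{name}:** {answers[name].strip()}" for name in SECTIONS
    )


DINNER_PARTY = {
    "Who/What": "You are helping me plan a dinner party for 8 people.",
    "The House": (
        "Two guests are vegetarian. One has a nut allergy. "
        "My kitchen is small and I don't own a stand mixer."
    ),
    "The Plan": (
        "I need a menu, a shopping list, and a cooking timeline "
        "that has everything ready by 7pm."
    ),
    "The Rules": (
        "Nothing that requires equipment I don't have. "
        "Total grocery budget under $200."
    ),
    "The Lifeline": (
        "If any dish seems too ambitious for the timeline, "
        "flag it and suggest a simpler alternative."
    ),
}

print(build_note(DINNER_PARTY))
```

The check on missing sections is the part worth noticing. Leave out "The Lifeline" and the sketch refuses to produce a note at all, which is roughly the discipline the exercise is asking of you.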

Quote to Steal:

"Without clear instructions, AI is nothing but potential."

Thanks for reading,

-Ep

Did this newsletter find you? If you liked what you read and want to join the conversation, CTRL-ALT-ADAPT is a weekly newsletter for experienced professionals navigating AI without the hype. Subscribe here: latchkey.ai